Resting-State fMRI Analysis in R#

From Preprocessed Data to Functional Connectivity#

Authors: Giulia Baracchini & Monika Doerig

Date: 13 Jan 2026

License:

Note: If this notebook uses neuroimaging tools from Neurocontainers, those tools retain their original licenses. Please see Neurodesk citation guidelines for details.

Citation and Resources:#

Tools included in this workflow#

R:

R Core Team. (2025). R: A language and environment for statistical computing (Version 4.4.3) [Software]. R Foundation for Statistical Computing. https://www.R-project.org/

Workflows this work is based on#

Original work from Giulia Baracchini:

Dataset#

HCP

Schaefer parcellation

Introduction#

This notebook is adapted from Giulia Baracchini’s comprehensive fMRI preprocessing tutorial (available on GitHub), which covers the essential steps of resting-state fMRI analysis from raw data preprocessing through functional connectivity analyses.

The original tutorial provides a complete pipeline covering:

Standard fMRI preprocessing - preparing raw neuroimaging data for analysis

Resting-state fMRI denoising - removing artifacts and noise from the signal

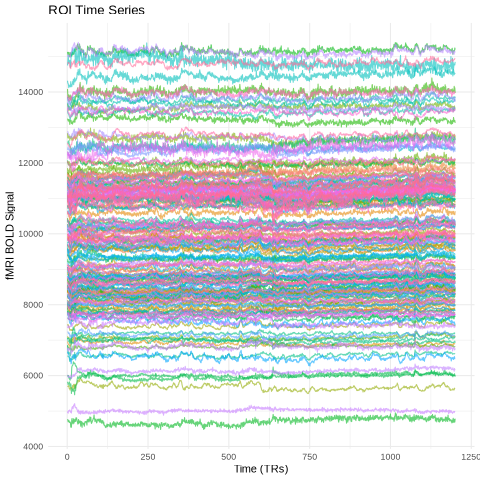

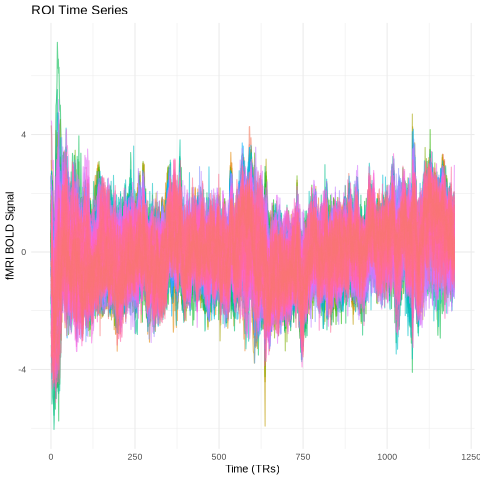

Time-series extraction and parcellation - converting voxel-level data to meaningful brain regions

Functional connectivity analyses - examining statistical relationships between brain regions

What This Notebook Covers#

While the original tutorial works with multiple subjects and uses the Schaefer 200-region, 7-network parcellation, this notebook focuses on a single-subject analysis of subject 101309 using the Schaefer 200-region, 17-network parcellation.

Key Concepts#

Parcellation: The process of grouping individual voxels into meaningful brain regions or “parcels.” Instead of analyzing thousands of individual voxels, we average signals within anatomically or functionally defined regions, making our analyses more interpretable and computationally manageable.
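To make the averaging step concrete, here is a minimal sketch using only the Python standard library. The function name `parcellate`, the toy voxel values, and the convention that label 0 marks background are all assumptions made for illustration; this is not the code used to produce the parcellated data in this notebook.

```python
from collections import defaultdict


def parcellate(voxel_ts, labels):
    """Average voxel time series within each parcel.

    voxel_ts: list of per-voxel time series (lists of equal length).
    labels:   one integer parcel id per voxel; 0 is treated as background
              (an assumption of this sketch).
    Returns a dict mapping parcel id -> mean time series.
    """
    groups = defaultdict(list)
    for ts, label in zip(voxel_ts, labels):
        if label != 0:
            groups[label].append(ts)
    # zip(*members) walks through the time points of all voxels in a parcel
    return {
        label: [sum(values) / len(values) for values in zip(*members)]
        for label, members in sorted(groups.items())
    }


# Toy data: 4 voxels, 3 time points each
voxel_ts = [
    [1.0, 2.0, 3.0],     # voxel in parcel 1
    [3.0, 4.0, 5.0],     # voxel in parcel 1
    [10.0, 10.0, 10.0],  # voxel in parcel 2
    [0.0, 0.0, 0.0],     # background voxel, ignored
]
labels = [1, 1, 2, 0]

parcel_ts = parcellate(voxel_ts, labels)
print(parcel_ts)  # {1: [2.0, 3.0, 4.0], 2: [10.0, 10.0, 10.0]}
```

Four voxel signals collapse into two parcel signals. The same reduction is what turns a voxel-level volume into the 200 regional time series used here.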

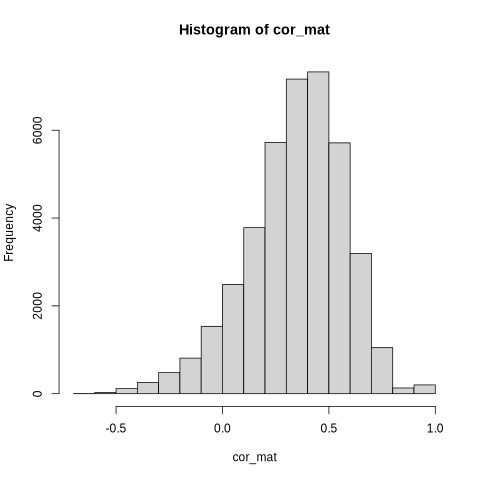

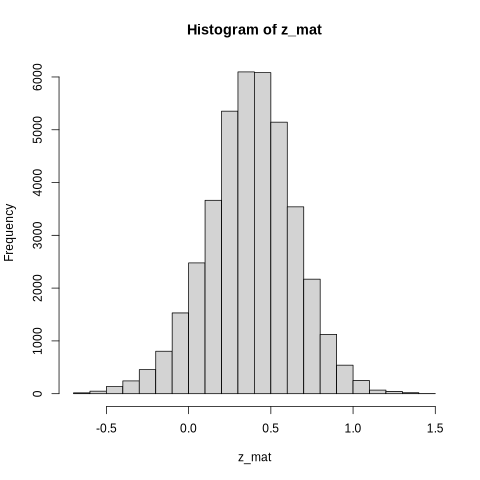

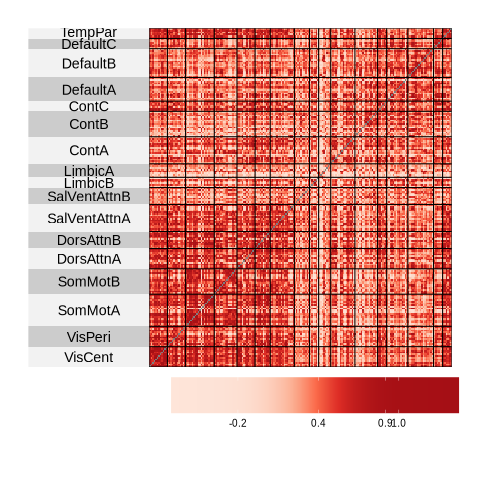

Functional Connectivity (FC): A statistical measure (typically Pearson’s correlation) that quantifies how synchronously different brain regions activate during rest. The result is a region × region matrix where each entry represents the strength of correlation between two brain areas.
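As an illustration of how such a region × region matrix arises, the sketch below computes Pearson correlations between three synthetic "regions" with the Python standard library. The signals and the helper names `pearson` and `functional_connectivity` are hypothetical; in practice a vectorised routine such as R's `cor()` would normally do this.

```python
import math


def pearson(x, y):
    """Pearson correlation between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def functional_connectivity(region_ts):
    """Build a symmetric region x region correlation matrix."""
    n = len(region_ts)
    fc = [[1.0] * n for _ in range(n)]  # each region correlates 1 with itself
    for i in range(n):
        for j in range(i + 1, n):
            fc[i][j] = fc[j][i] = pearson(region_ts[i], region_ts[j])
    return fc


# Synthetic signals (assumptions for illustration only)
t = range(200)
region_a = [math.sin(0.3 * k) for k in t]
region_b = [a + 0.1 * math.cos(1.7 * k) for a, k in zip(region_a, t)]  # a plus a small perturbation
region_c = [-a for a in region_a]                                      # mirror image of a

fc = functional_connectivity([region_a, region_b, region_c])
for row in fc:
    print([round(v, 3) for v in row])
```

Regions a and b move together, so their entry is close to +1, while the mirrored region c yields an entry of -1 with region a. The matrix is symmetric with ones on the diagonal.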

Analysis Pipeline#

This notebook focuses on the analysis phase using already preprocessed and parcellated data. Starting with clean time series data from 200 brain regions, we implement:

Data normalization - standardizing time series signals across regions

Functional connectivity calculation - computing correlation matrices between brain regions

Fisher z-transformation - normalizing correlation values for statistical analysis

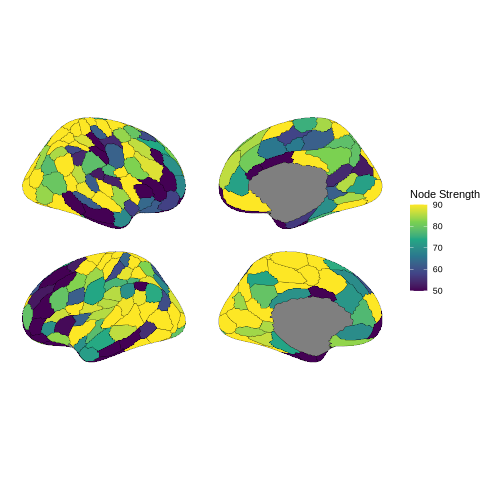

Network visualization - creating heatmaps and brain plots to visualize connectivity patterns

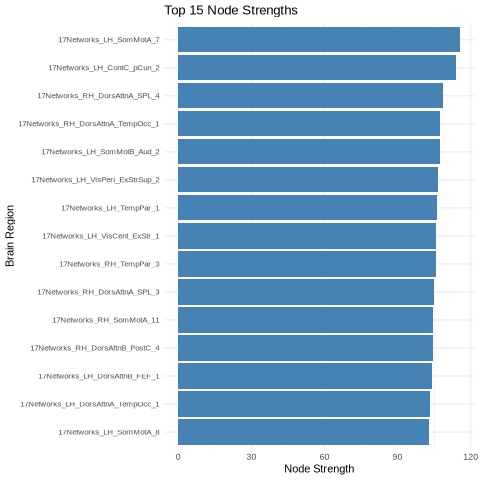

Nodal strength analysis - quantifying each region’s overall connectivity

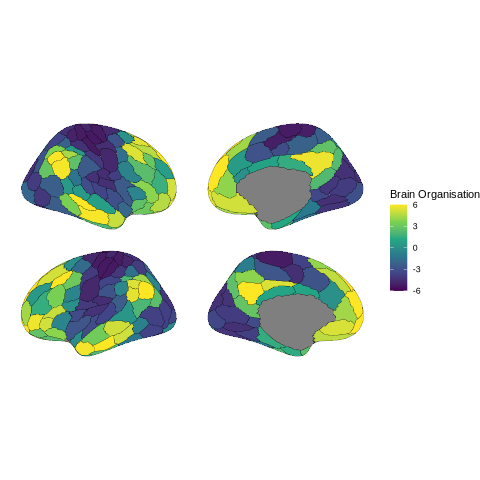

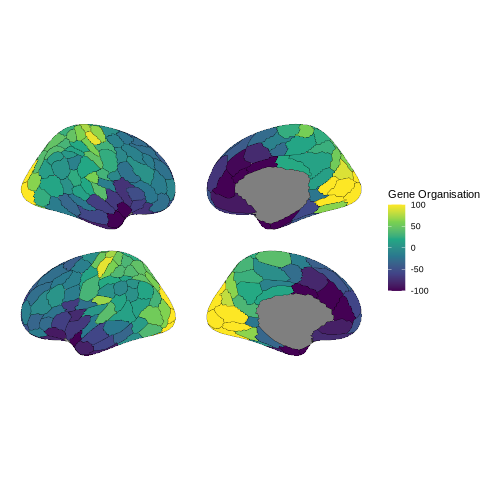

Relationships to other measures of brain organisation - relating connectivity patterns to brain organization gradients and gene expression patterns

The Schaefer parcellation we’re using divides the brain into 200 regions across 17 functional networks, providing a good balance between spatial resolution and interpretability for resting-state connectivity analyses.
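The numerical steps in this pipeline can be sketched on a tiny example with the Python standard library. Several choices here are assumptions for illustration rather than a statement of what the notebook's R code does: the z-score uses the sample standard deviation (as R's `scale()` does), the diagonal is set to 0 before summing because the Fisher transform of 1 is infinite, and nodal strength is taken as the sum of a region's transformed connections. The 3 × 3 correlation matrix is made up.

```python
import math


def zscore(ts):
    """Standardise a time series to mean 0 and sample SD 1."""
    n = len(ts)
    mean = sum(ts) / n
    sd = math.sqrt(sum((v - mean) ** 2 for v in ts) / (n - 1))
    return [(v - mean) / sd for v in ts]


def fisher_z_matrix(fc):
    """Apply z = atanh(r) to off-diagonal entries; diagonal is set to 0."""
    n = len(fc)
    return [
        [0.0 if i == j else math.atanh(fc[i][j]) for j in range(n)]
        for i in range(n)
    ]


def nodal_strength(fc_z):
    """Sum each region's connections to all other regions."""
    n = len(fc_z)
    return [sum(fc_z[i][j] for j in range(n) if j != i) for i in range(n)]


# Normalisation of a single toy time series
z = zscore([1.0, 2.0, 3.0, 4.0])
print([round(v, 3) for v in z])

# Hypothetical correlation matrix for three regions
fc = [
    [1.0, 0.5, -0.2],
    [0.5, 1.0, 0.3],
    [-0.2, 0.3, 1.0],
]
fc_z = fisher_z_matrix(fc)
strength = nodal_strength(fc_z)
print([round(s, 3) for s in strength])
```

The Fisher transform stretches correlations near ±1, which makes values better suited to averaging and parametric statistics. Whether strength should use signed, absolute, or positive-only weights is an analysis decision that this sketch does not settle.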

Running R in Jupyter with Python Kernel#

⚠️ Run R in Jupyter Notebook: This notebook uses R magic commands (%%R) to run R code within a Python kernel environment. This approach offers several advantages:

- No kernel switching required - all code runs in the Python kernel

- Seamless Python ↔ R integration - easy data exchange between languages

- Fully automated setup - no manual kernel installation needed

Note: Alternatively, Jupyter supports native R kernels, but the magic command approach keeps everything within the Python kernel for simpler workflow management.

Setup Steps#

1. Install R runtime and packages via mamba:

# Install the R runtime, the Python↔R bridge (rpy2), and geospatial R packages from conda-forge

# Note: r-base provides the R runtime; we avoid r-essentials (80+ packages) to prevent solver conflicts

!mamba install -c conda-forge r-base rpy2 r-sf r-units r-s2 r-ggplot2 -y -q

# Set env vars before loading rpy2

# Set PROJ database path so sf can find coordinate reference systems

# (needed when r-sf is installed via conda)

import os

proj_path = os.path.join(os.environ.get("CONDA_PREFIX", "/opt/conda"), "share", "proj")

os.environ["PROJ_LIB"] = proj_path

os.environ["PROJ_DATA"] = proj_path
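Before continuing, it can help to confirm that the pieces installed above are visible from the Python kernel. The following is an optional sketch, not part of the original workflow; the helper name `check_r_bridge` is hypothetical, and it only checks that an `R` executable is on the `PATH`, that `rpy2` can be found, and that the PROJ directory named in the environment exists.

```python
import importlib.util
import os
import shutil


def check_r_bridge():
    """Report whether the prerequisites for %%R cells appear to be in place."""
    proj_dir = os.environ.get("PROJ_DATA") or os.environ.get("PROJ_LIB")
    return {
        # R executable discoverable on PATH
        "R_on_path": shutil.which("R") is not None,
        # rpy2 installed in the current Python environment
        "rpy2_importable": importlib.util.find_spec("rpy2") is not None,
        # PROJ database directory set and present on disk
        "proj_dir_exists": bool(proj_dir) and os.path.isdir(proj_dir),
    }


for name, ok in check_r_bridge().items():
    print(f"{name}: {'ok' if ok else 'missing'}")
```

If any entry reports `missing`, rerunning the install cell or restarting the kernel is a reasonable first thing to try.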

2. Enable %%R magic commands:

This extension needs to be loaded once per kernel session. Once it is loaded, notebook cells can run both Python 3 and R code.

%load_ext rpy2.ipython

3. Install R packages from CRAN and r-universe:

%%R

# ==============================================================================

# Setup a User-Specific R Library and Install Packages

# ==============================================================================

# 1. Define and create a personal library path

user_lib <- "~/R/library"

dir.create(user_lib, recursive = TRUE, showWarnings = FALSE)

# 2. Add the personal library to R's library search path

.libPaths(c(user_lib, .libPaths()))

# 3. Set repositories: CRAN + ggseg r-universe

options(repos = c(

ggseg = "https://ggseg.r-universe.dev",

CRAN = "https://cloud.r-project.org"

))

# 4. Install required packages from CRAN

install.packages(c("remotes", "ggplot2", "dplyr", "tidyr", "superheat", "knitr"))

# 5. Install ggseg from r-universe

install.packages("ggseg")

# 6. Install ggsegSchaefer - try r-universe first, fall back to GitHub

# (r-universe may not have binaries for all platforms, e.g. linux/arm64)

install.packages("ggsegSchaefer")

if (!requireNamespace("ggsegSchaefer", quietly = TRUE)) {

message("ggsegSchaefer not found via r-universe, installing from GitHub...")

remotes::install_github("ggseg/ggsegSchaefer", upgrade = "never")

}

# 7. Verify the library path

print("R packages will be installed in and loaded from:")

print(.libPaths())


x86_64-conda-linux-gnu-c++ -std=gnu++17 -I"/opt/conda/lib/R/include" -DNDEBUG -I. -UDEBUG -DNDEBUG -DU_HAVE_ELF_H -Iicu74 -Iicu74/unicode -Iicu74/common -Iicu74/i18n -DU_STATIC_IMPLEMENTATION -DU_COMMON_IMPLEMENTATION -DU_I18N_IMPLEMENTATION -D_REENTRANT -DUCONFIG_USE_LOCAL -DNDEBUG -D_FORTIFY_SOURCE=2 -O2 -isystem /opt/conda/include -I/opt/conda/include -Wl,-rpath-link,/opt/conda/lib -fpic -fpic -fvisibility-inlines-hidden -fmessage-length=0 -march=nocona -mtune=haswell -ftree-vectorize -fPIC -fstack-protector-strong -fno-plt -O2 -ffunction-sections -pipe -isystem /opt/conda/include -fdebug-prefix-map=/home/conda/feedstock_root/build_artifacts/r-base-split_1773745610701/work=/usr/local/src/conda/r-base-4.5.3 -fdebug-prefix-map=/opt/conda=/usr/local/src/conda-prefix -c stri_container_utf16.cpp -o stri_container_utf16.o

x86_64-conda-linux-gnu-c++ -std=gnu++17 -I"/opt/conda/lib/R/include" -DNDEBUG -I. -UDEBUG -DNDEBUG -DU_HAVE_ELF_H -Iicu74 -Iicu74/unicode -Iicu74/common -Iicu74/i18n -DU_STATIC_IMPLEMENTATION -DU_COMMON_IMPLEMENTATION -DU_I18N_IMPLEMENTATION -D_REENTRANT -DUCONFIG_USE_LOCAL -DNDEBUG -D_FORTIFY_SOURCE=2 -O2 -isystem /opt/conda/include -I/opt/conda/include -Wl,-rpath-link,/opt/conda/lib -fpic -fpic -fvisibility-inlines-hidden -fmessage-length=0 -march=nocona -mtune=haswell -ftree-vectorize -fPIC -fstack-protector-strong -fno-plt -O2 -ffunction-sections -pipe -isystem /opt/conda/include -fdebug-prefix-map=/home/conda/feedstock_root/build_artifacts/r-base-split_1773745610701/work=/usr/local/src/conda/r-base-4.5.3 -fdebug-prefix-map=/opt/conda=/usr/local/src/conda-prefix -c stri_container_utf8.cpp -o stri_container_utf8.o

x86_64-conda-linux-gnu-c++ -std=gnu++17 -I"/opt/conda/lib/R/include" -DNDEBUG -I. -UDEBUG -DNDEBUG -DU_HAVE_ELF_H -Iicu74 -Iicu74/unicode -Iicu74/common -Iicu74/i18n -DU_STATIC_IMPLEMENTATION -DU_COMMON_IMPLEMENTATION -DU_I18N_IMPLEMENTATION -D_REENTRANT -DUCONFIG_USE_LOCAL -DNDEBUG -D_FORTIFY_SOURCE=2 -O2 -isystem /opt/conda/include -I/opt/conda/include -Wl,-rpath-link,/opt/conda/lib -fpic -fpic -fvisibility-inlines-hidden -fmessage-length=0 -march=nocona -mtune=haswell -ftree-vectorize -fPIC -fstack-protector-strong -fno-plt -O2 -ffunction-sections -pipe -isystem /opt/conda/include -fdebug-prefix-map=/home/conda/feedstock_root/build_artifacts/r-base-split_1773745610701/work=/usr/local/src/conda/r-base-4.5.3 -fdebug-prefix-map=/opt/conda=/usr/local/src/conda-prefix -c stri_container_utf8_indexable.cpp -o stri_container_utf8_indexable.o

x86_64-conda-linux-gnu-c++ -std=gnu++17 -I"/opt/conda/lib/R/include" -DNDEBUG -I. -UDEBUG -DNDEBUG -DU_HAVE_ELF_H -Iicu74 -Iicu74/unicode -Iicu74/common -Iicu74/i18n -DU_STATIC_IMPLEMENTATION -DU_COMMON_IMPLEMENTATION -DU_I18N_IMPLEMENTATION -D_REENTRANT -DUCONFIG_USE_LOCAL -DNDEBUG -D_FORTIFY_SOURCE=2 -O2 -isystem /opt/conda/include -I/opt/conda/include -Wl,-rpath-link,/opt/conda/lib -fpic -fpic -fvisibility-inlines-hidden -fmessage-length=0 -march=nocona -mtune=haswell -ftree-vectorize -fPIC -fstack-protector-strong -fno-plt -O2 -ffunction-sections -pipe -isystem /opt/conda/include -fdebug-prefix-map=/home/conda/feedstock_root/build_artifacts/r-base-split_1773745610701/work=/usr/local/src/conda/r-base-4.5.3 -fdebug-prefix-map=/opt/conda=/usr/local/src/conda-prefix -c stri_encoding_conversion.cpp -o stri_encoding_conversion.o

x86_64-conda-linux-gnu-c++ -std=gnu++17 -I"/opt/conda/lib/R/include" -DNDEBUG -I. -UDEBUG -DNDEBUG -DU_HAVE_ELF_H -Iicu74 -Iicu74/unicode -Iicu74/common -Iicu74/i18n -DU_STATIC_IMPLEMENTATION -DU_COMMON_IMPLEMENTATION -DU_I18N_IMPLEMENTATION -D_REENTRANT -DUCONFIG_USE_LOCAL -DNDEBUG -D_FORTIFY_SOURCE=2 -O2 -isystem /opt/conda/include -I/opt/conda/include -Wl,-rpath-link,/opt/conda/lib -fpic -fpic -fvisibility-inlines-hidden -fmessage-length=0 -march=nocona -mtune=haswell -ftree-vectorize -fPIC -fstack-protector-strong -fno-plt -O2 -ffunction-sections -pipe -isystem /opt/conda/include -fdebug-prefix-map=/home/conda/feedstock_root/build_artifacts/r-base-split_1773745610701/work=/usr/local/src/conda/r-base-4.5.3 -fdebug-prefix-map=/opt/conda=/usr/local/src/conda-prefix -c stri_encoding_detection.cpp -o stri_encoding_detection.o

x86_64-conda-linux-gnu-c++ -std=gnu++17 -I"/opt/conda/lib/R/include" -DNDEBUG -I. -UDEBUG -DNDEBUG -DU_HAVE_ELF_H -Iicu74 -Iicu74/unicode -Iicu74/common -Iicu74/i18n -DU_STATIC_IMPLEMENTATION -DU_COMMON_IMPLEMENTATION -DU_I18N_IMPLEMENTATION -D_REENTRANT -DUCONFIG_USE_LOCAL -DNDEBUG -D_FORTIFY_SOURCE=2 -O2 -isystem /opt/conda/include -I/opt/conda/include -Wl,-rpath-link,/opt/conda/lib -fpic -fpic -fvisibility-inlines-hidden -fmessage-length=0 -march=nocona -mtune=haswell -ftree-vectorize -fPIC -fstack-protector-strong -fno-plt -O2 -ffunction-sections -pipe -isystem /opt/conda/include -fdebug-prefix-map=/home/conda/feedstock_root/build_artifacts/r-base-split_1773745610701/work=/usr/local/src/conda/r-base-4.5.3 -fdebug-prefix-map=/opt/conda=/usr/local/src/conda-prefix -c stri_encoding_management.cpp -o stri_encoding_management.o

x86_64-conda-linux-gnu-c++ -std=gnu++17 -I"/opt/conda/lib/R/include" -DNDEBUG -I. -UDEBUG -DNDEBUG -DU_HAVE_ELF_H -Iicu74 -Iicu74/unicode -Iicu74/common -Iicu74/i18n -DU_STATIC_IMPLEMENTATION -DU_COMMON_IMPLEMENTATION -DU_I18N_IMPLEMENTATION -D_REENTRANT -DUCONFIG_USE_LOCAL -DNDEBUG -D_FORTIFY_SOURCE=2 -O2 -isystem /opt/conda/include -I/opt/conda/include -Wl,-rpath-link,/opt/conda/lib -fpic -fpic -fvisibility-inlines-hidden -fmessage-length=0 -march=nocona -mtune=haswell -ftree-vectorize -fPIC -fstack-protector-strong -fno-plt -O2 -ffunction-sections -pipe -isystem /opt/conda/include -fdebug-prefix-map=/home/conda/feedstock_root/build_artifacts/r-base-split_1773745610701/work=/usr/local/src/conda/r-base-4.5.3 -fdebug-prefix-map=/opt/conda=/usr/local/src/conda-prefix -c stri_escape.cpp -o stri_escape.o

x86_64-conda-linux-gnu-c++ -std=gnu++17 -I"/opt/conda/lib/R/include" -DNDEBUG -I. -UDEBUG -DNDEBUG -DU_HAVE_ELF_H -Iicu74 -Iicu74/unicode -Iicu74/common -Iicu74/i18n -DU_STATIC_IMPLEMENTATION -DU_COMMON_IMPLEMENTATION -DU_I18N_IMPLEMENTATION -D_REENTRANT -DUCONFIG_USE_LOCAL -DNDEBUG -D_FORTIFY_SOURCE=2 -O2 -isystem /opt/conda/include -I/opt/conda/include -Wl,-rpath-link,/opt/conda/lib -fpic -fpic -fvisibility-inlines-hidden -fmessage-length=0 -march=nocona -mtune=haswell -ftree-vectorize -fPIC -fstack-protector-strong -fno-plt -O2 -ffunction-sections -pipe -isystem /opt/conda/include -fdebug-prefix-map=/home/conda/feedstock_root/build_artifacts/r-base-split_1773745610701/work=/usr/local/src/conda/r-base-4.5.3 -fdebug-prefix-map=/opt/conda=/usr/local/src/conda-prefix -c stri_exception.cpp -o stri_exception.o

x86_64-conda-linux-gnu-c++ -std=gnu++17 -I"/opt/conda/lib/R/include" -DNDEBUG -I. -UDEBUG -DNDEBUG -DU_HAVE_ELF_H -Iicu74 -Iicu74/unicode -Iicu74/common -Iicu74/i18n -DU_STATIC_IMPLEMENTATION -DU_COMMON_IMPLEMENTATION -DU_I18N_IMPLEMENTATION -D_REENTRANT -DUCONFIG_USE_LOCAL -DNDEBUG -D_FORTIFY_SOURCE=2 -O2 -isystem /opt/conda/include -I/opt/conda/include -Wl,-rpath-link,/opt/conda/lib -fpic -fpic -fvisibility-inlines-hidden -fmessage-length=0 -march=nocona -mtune=haswell -ftree-vectorize -fPIC -fstack-protector-strong -fno-plt -O2 -ffunction-sections -pipe -isystem /opt/conda/include -fdebug-prefix-map=/home/conda/feedstock_root/build_artifacts/r-base-split_1773745610701/work=/usr/local/src/conda/r-base-4.5.3 -fdebug-prefix-map=/opt/conda=/usr/local/src/conda-prefix -c stri_ICU_settings.cpp -o stri_ICU_settings.o

x86_64-conda-linux-gnu-c++ -std=gnu++17 -I"/opt/conda/lib/R/include" -DNDEBUG -I. -UDEBUG -DNDEBUG -DU_HAVE_ELF_H -Iicu74 -Iicu74/unicode -Iicu74/common -Iicu74/i18n -DU_STATIC_IMPLEMENTATION -DU_COMMON_IMPLEMENTATION -DU_I18N_IMPLEMENTATION -D_REENTRANT -DUCONFIG_USE_LOCAL -DNDEBUG -D_FORTIFY_SOURCE=2 -O2 -isystem /opt/conda/include -I/opt/conda/include -Wl,-rpath-link,/opt/conda/lib -fpic -fpic -fvisibility-inlines-hidden -fmessage-length=0 -march=nocona -mtune=haswell -ftree-vectorize -fPIC -fstack-protector-strong -fno-plt -O2 -ffunction-sections -pipe -isystem /opt/conda/include -fdebug-prefix-map=/home/conda/feedstock_root/build_artifacts/r-base-split_1773745610701/work=/usr/local/src/conda/r-base-4.5.3 -fdebug-prefix-map=/opt/conda=/usr/local/src/conda-prefix -c stri_join.cpp -o stri_join.o

x86_64-conda-linux-gnu-c++ -std=gnu++17 -I"/opt/conda/lib/R/include" -DNDEBUG -I. -UDEBUG -DNDEBUG -DU_HAVE_ELF_H -Iicu74 -Iicu74/unicode -Iicu74/common -Iicu74/i18n -DU_STATIC_IMPLEMENTATION -DU_COMMON_IMPLEMENTATION -DU_I18N_IMPLEMENTATION -D_REENTRANT -DUCONFIG_USE_LOCAL -DNDEBUG -D_FORTIFY_SOURCE=2 -O2 -isystem /opt/conda/include -I/opt/conda/include -Wl,-rpath-link,/opt/conda/lib -fpic -fpic -fvisibility-inlines-hidden -fmessage-length=0 -march=nocona -mtune=haswell -ftree-vectorize -fPIC -fstack-protector-strong -fno-plt -O2 -ffunction-sections -pipe -isystem /opt/conda/include -fdebug-prefix-map=/home/conda/feedstock_root/build_artifacts/r-base-split_1773745610701/work=/usr/local/src/conda/r-base-4.5.3 -fdebug-prefix-map=/opt/conda=/usr/local/src/conda-prefix -c stri_length.cpp -o stri_length.o

x86_64-conda-linux-gnu-c++ -std=gnu++17 -I"/opt/conda/lib/R/include" -DNDEBUG -I. -UDEBUG -DNDEBUG -DU_HAVE_ELF_H -Iicu74 -Iicu74/unicode -Iicu74/common -Iicu74/i18n -DU_STATIC_IMPLEMENTATION -DU_COMMON_IMPLEMENTATION -DU_I18N_IMPLEMENTATION -D_REENTRANT -DUCONFIG_USE_LOCAL -DNDEBUG -D_FORTIFY_SOURCE=2 -O2 -isystem /opt/conda/include -I/opt/conda/include -Wl,-rpath-link,/opt/conda/lib -fpic -fpic -fvisibility-inlines-hidden -fmessage-length=0 -march=nocona -mtune=haswell -ftree-vectorize -fPIC -fstack-protector-strong -fno-plt -O2 -ffunction-sections -pipe -isystem /opt/conda/include -fdebug-prefix-map=/home/conda/feedstock_root/build_artifacts/r-base-split_1773745610701/work=/usr/local/src/conda/r-base-4.5.3 -fdebug-prefix-map=/opt/conda=/usr/local/src/conda-prefix -c stri_pad.cpp -o stri_pad.o

x86_64-conda-linux-gnu-c++ -std=gnu++17 -I"/opt/conda/lib/R/include" -DNDEBUG -I. -UDEBUG -DNDEBUG -DU_HAVE_ELF_H -Iicu74 -Iicu74/unicode -Iicu74/common -Iicu74/i18n -DU_STATIC_IMPLEMENTATION -DU_COMMON_IMPLEMENTATION -DU_I18N_IMPLEMENTATION -D_REENTRANT -DUCONFIG_USE_LOCAL -DNDEBUG -D_FORTIFY_SOURCE=2 -O2 -isystem /opt/conda/include -I/opt/conda/include -Wl,-rpath-link,/opt/conda/lib -fpic -fpic -fvisibility-inlines-hidden -fmessage-length=0 -march=nocona -mtune=haswell -ftree-vectorize -fPIC -fstack-protector-strong -fno-plt -O2 -ffunction-sections -pipe -isystem /opt/conda/include -fdebug-prefix-map=/home/conda/feedstock_root/build_artifacts/r-base-split_1773745610701/work=/usr/local/src/conda/r-base-4.5.3 -fdebug-prefix-map=/opt/conda=/usr/local/src/conda-prefix -c stri_prepare_arg.cpp -o stri_prepare_arg.o

x86_64-conda-linux-gnu-c++ -std=gnu++17 -I"/opt/conda/lib/R/include" -DNDEBUG -I. -UDEBUG -DNDEBUG -DU_HAVE_ELF_H -Iicu74 -Iicu74/unicode -Iicu74/common -Iicu74/i18n -DU_STATIC_IMPLEMENTATION -DU_COMMON_IMPLEMENTATION -DU_I18N_IMPLEMENTATION -D_REENTRANT -DUCONFIG_USE_LOCAL -DNDEBUG -D_FORTIFY_SOURCE=2 -O2 -isystem /opt/conda/include -I/opt/conda/include -Wl,-rpath-link,/opt/conda/lib -fpic -fpic -fvisibility-inlines-hidden -fmessage-length=0 -march=nocona -mtune=haswell -ftree-vectorize -fPIC -fstack-protector-strong -fno-plt -O2 -ffunction-sections -pipe -isystem /opt/conda/include -fdebug-prefix-map=/home/conda/feedstock_root/build_artifacts/r-base-split_1773745610701/work=/usr/local/src/conda/r-base-4.5.3 -fdebug-prefix-map=/opt/conda=/usr/local/src/conda-prefix -c stri_random.cpp -o stri_random.o

x86_64-conda-linux-gnu-c++ -std=gnu++17 -I"/opt/conda/lib/R/include" -DNDEBUG -I. -UDEBUG -DNDEBUG -DU_HAVE_ELF_H -Iicu74 -Iicu74/unicode -Iicu74/common -Iicu74/i18n -DU_STATIC_IMPLEMENTATION -DU_COMMON_IMPLEMENTATION -DU_I18N_IMPLEMENTATION -D_REENTRANT -DUCONFIG_USE_LOCAL -DNDEBUG -D_FORTIFY_SOURCE=2 -O2 -isystem /opt/conda/include -I/opt/conda/include -Wl,-rpath-link,/opt/conda/lib -fpic -fpic -fvisibility-inlines-hidden -fmessage-length=0 -march=nocona -mtune=haswell -ftree-vectorize -fPIC -fstack-protector-strong -fno-plt -O2 -ffunction-sections -pipe -isystem /opt/conda/include -fdebug-prefix-map=/home/conda/feedstock_root/build_artifacts/r-base-split_1773745610701/work=/usr/local/src/conda/r-base-4.5.3 -fdebug-prefix-map=/opt/conda=/usr/local/src/conda-prefix -c stri_reverse.cpp -o stri_reverse.o

x86_64-conda-linux-gnu-c++ -std=gnu++17 -I"/opt/conda/lib/R/include" -DNDEBUG -I. -UDEBUG -DNDEBUG -DU_HAVE_ELF_H -Iicu74 -Iicu74/unicode -Iicu74/common -Iicu74/i18n -DU_STATIC_IMPLEMENTATION -DU_COMMON_IMPLEMENTATION -DU_I18N_IMPLEMENTATION -D_REENTRANT -DUCONFIG_USE_LOCAL -DNDEBUG -D_FORTIFY_SOURCE=2 -O2 -isystem /opt/conda/include -I/opt/conda/include -Wl,-rpath-link,/opt/conda/lib -fpic -fpic -fvisibility-inlines-hidden -fmessage-length=0 -march=nocona -mtune=haswell -ftree-vectorize -fPIC -fstack-protector-strong -fno-plt -O2 -ffunction-sections -pipe -isystem /opt/conda/include -fdebug-prefix-map=/home/conda/feedstock_root/build_artifacts/r-base-split_1773745610701/work=/usr/local/src/conda/r-base-4.5.3 -fdebug-prefix-map=/opt/conda=/usr/local/src/conda-prefix -c stri_search_class_count.cpp -o stri_search_class_count.o

x86_64-conda-linux-gnu-c++ -std=gnu++17 -I"/opt/conda/lib/R/include" -DNDEBUG -I. -UDEBUG -DNDEBUG -DU_HAVE_ELF_H -Iicu74 -Iicu74/unicode -Iicu74/common -Iicu74/i18n -DU_STATIC_IMPLEMENTATION -DU_COMMON_IMPLEMENTATION -DU_I18N_IMPLEMENTATION -D_REENTRANT -DUCONFIG_USE_LOCAL -DNDEBUG -D_FORTIFY_SOURCE=2 -O2 -isystem /opt/conda/include -I/opt/conda/include -Wl,-rpath-link,/opt/conda/lib -fpic -fpic -fvisibility-inlines-hidden -fmessage-length=0 -march=nocona -mtune=haswell -ftree-vectorize -fPIC -fstack-protector-strong -fno-plt -O2 -ffunction-sections -pipe -isystem /opt/conda/include -fdebug-prefix-map=/home/conda/feedstock_root/build_artifacts/r-base-split_1773745610701/work=/usr/local/src/conda/r-base-4.5.3 -fdebug-prefix-map=/opt/conda=/usr/local/src/conda-prefix -c stri_search_class_detect.cpp -o stri_search_class_detect.o

x86_64-conda-linux-gnu-c++ -std=gnu++17 -I"/opt/conda/lib/R/include" -DNDEBUG -I. -UDEBUG -DNDEBUG -DU_HAVE_ELF_H -Iicu74 -Iicu74/unicode -Iicu74/common -Iicu74/i18n -DU_STATIC_IMPLEMENTATION -DU_COMMON_IMPLEMENTATION -DU_I18N_IMPLEMENTATION -D_REENTRANT -DUCONFIG_USE_LOCAL -DNDEBUG -D_FORTIFY_SOURCE=2 -O2 -isystem /opt/conda/include -I/opt/conda/include -Wl,-rpath-link,/opt/conda/lib -fpic -fpic -fvisibility-inlines-hidden -fmessage-length=0 -march=nocona -mtune=haswell -ftree-vectorize -fPIC -fstack-protector-strong -fno-plt -O2 -ffunction-sections -pipe -isystem /opt/conda/include -fdebug-prefix-map=/home/conda/feedstock_root/build_artifacts/r-base-split_1773745610701/work=/usr/local/src/conda/r-base-4.5.3 -fdebug-prefix-map=/opt/conda=/usr/local/src/conda-prefix -c stri_search_class_extract.cpp -o stri_search_class_extract.o

x86_64-conda-linux-gnu-c++ -std=gnu++17 -I"/opt/conda/lib/R/include" -DNDEBUG -I. -UDEBUG -DNDEBUG -DU_HAVE_ELF_H -Iicu74 -Iicu74/unicode -Iicu74/common -Iicu74/i18n -DU_STATIC_IMPLEMENTATION -DU_COMMON_IMPLEMENTATION -DU_I18N_IMPLEMENTATION -D_REENTRANT -DUCONFIG_USE_LOCAL -DNDEBUG -D_FORTIFY_SOURCE=2 -O2 -isystem /opt/conda/include -I/opt/conda/include -Wl,-rpath-link,/opt/conda/lib -fpic -fpic -fvisibility-inlines-hidden -fmessage-length=0 -march=nocona -mtune=haswell -ftree-vectorize -fPIC -fstack-protector-strong -fno-plt -O2 -ffunction-sections -pipe -isystem /opt/conda/include -fdebug-prefix-map=/home/conda/feedstock_root/build_artifacts/r-base-split_1773745610701/work=/usr/local/src/conda/r-base-4.5.3 -fdebug-prefix-map=/opt/conda=/usr/local/src/conda-prefix -c stri_search_class_locate.cpp -o stri_search_class_locate.o

x86_64-conda-linux-gnu-c++ -std=gnu++17 -I"/opt/conda/lib/R/include" -DNDEBUG -I. -UDEBUG -DNDEBUG -DU_HAVE_ELF_H -Iicu74 -Iicu74/unicode -Iicu74/common -Iicu74/i18n -DU_STATIC_IMPLEMENTATION -DU_COMMON_IMPLEMENTATION -DU_I18N_IMPLEMENTATION -D_REENTRANT -DUCONFIG_USE_LOCAL -DNDEBUG -D_FORTIFY_SOURCE=2 -O2 -isystem /opt/conda/include -I/opt/conda/include -Wl,-rpath-link,/opt/conda/lib -fpic -fpic -fvisibility-inlines-hidden -fmessage-length=0 -march=nocona -mtune=haswell -ftree-vectorize -fPIC -fstack-protector-strong -fno-plt -O2 -ffunction-sections -pipe -isystem /opt/conda/include -fdebug-prefix-map=/home/conda/feedstock_root/build_artifacts/r-base-split_1773745610701/work=/usr/local/src/conda/r-base-4.5.3 -fdebug-prefix-map=/opt/conda=/usr/local/src/conda-prefix -c stri_search_class_replace.cpp -o stri_search_class_replace.o

x86_64-conda-linux-gnu-c++ -std=gnu++17 -I"/opt/conda/lib/R/include" -DNDEBUG -I. -UDEBUG -DNDEBUG -DU_HAVE_ELF_H -Iicu74 -Iicu74/unicode -Iicu74/common -Iicu74/i18n -DU_STATIC_IMPLEMENTATION -DU_COMMON_IMPLEMENTATION -DU_I18N_IMPLEMENTATION -D_REENTRANT -DUCONFIG_USE_LOCAL -DNDEBUG -D_FORTIFY_SOURCE=2 -O2 -isystem /opt/conda/include -I/opt/conda/include -Wl,-rpath-link,/opt/conda/lib -fpic -fpic -fvisibility-inlines-hidden -fmessage-length=0 -march=nocona -mtune=haswell -ftree-vectorize -fPIC -fstack-protector-strong -fno-plt -O2 -ffunction-sections -pipe -isystem /opt/conda/include -fdebug-prefix-map=/home/conda/feedstock_root/build_artifacts/r-base-split_1773745610701/work=/usr/local/src/conda/r-base-4.5.3 -fdebug-prefix-map=/opt/conda=/usr/local/src/conda-prefix -c stri_search_class_split.cpp -o stri_search_class_split.o

x86_64-conda-linux-gnu-c++ -std=gnu++17 -I"/opt/conda/lib/R/include" -DNDEBUG -I. -UDEBUG -DNDEBUG -DU_HAVE_ELF_H -Iicu74 -Iicu74/unicode -Iicu74/common -Iicu74/i18n -DU_STATIC_IMPLEMENTATION -DU_COMMON_IMPLEMENTATION -DU_I18N_IMPLEMENTATION -D_REENTRANT -DUCONFIG_USE_LOCAL -DNDEBUG -D_FORTIFY_SOURCE=2 -O2 -isystem /opt/conda/include -I/opt/conda/include -Wl,-rpath-link,/opt/conda/lib -fpic -fpic -fvisibility-inlines-hidden -fmessage-length=0 -march=nocona -mtune=haswell -ftree-vectorize -fPIC -fstack-protector-strong -fno-plt -O2 -ffunction-sections -pipe -isystem /opt/conda/include -fdebug-prefix-map=/home/conda/feedstock_root/build_artifacts/r-base-split_1773745610701/work=/usr/local/src/conda/r-base-4.5.3 -fdebug-prefix-map=/opt/conda=/usr/local/src/conda-prefix -c stri_search_class_startsendswith.cpp -o stri_search_class_startsendswith.o

x86_64-conda-linux-gnu-c++ -std=gnu++17 -I"/opt/conda/lib/R/include" -DNDEBUG -I. -UDEBUG -DNDEBUG -DU_HAVE_ELF_H -Iicu74 -Iicu74/unicode -Iicu74/common -Iicu74/i18n -DU_STATIC_IMPLEMENTATION -DU_COMMON_IMPLEMENTATION -DU_I18N_IMPLEMENTATION -D_REENTRANT -DUCONFIG_USE_LOCAL -DNDEBUG -D_FORTIFY_SOURCE=2 -O2 -isystem /opt/conda/include -I/opt/conda/include -Wl,-rpath-link,/opt/conda/lib -fpic -fpic -fvisibility-inlines-hidden -fmessage-length=0 -march=nocona -mtune=haswell -ftree-vectorize -fPIC -fstack-protector-strong -fno-plt -O2 -ffunction-sections -pipe -isystem /opt/conda/include -fdebug-prefix-map=/home/conda/feedstock_root/build_artifacts/r-base-split_1773745610701/work=/usr/local/src/conda/r-base-4.5.3 -fdebug-prefix-map=/opt/conda=/usr/local/src/conda-prefix -c stri_search_class_subset.cpp -o stri_search_class_subset.o

x86_64-conda-linux-gnu-c++ -std=gnu++17 -I"/opt/conda/lib/R/include" -DNDEBUG -I. -UDEBUG -DNDEBUG -DU_HAVE_ELF_H -Iicu74 -Iicu74/unicode -Iicu74/common -Iicu74/i18n -DU_STATIC_IMPLEMENTATION -DU_COMMON_IMPLEMENTATION -DU_I18N_IMPLEMENTATION -D_REENTRANT -DUCONFIG_USE_LOCAL -DNDEBUG -D_FORTIFY_SOURCE=2 -O2 -isystem /opt/conda/include -I/opt/conda/include -Wl,-rpath-link,/opt/conda/lib -fpic -fpic -fvisibility-inlines-hidden -fmessage-length=0 -march=nocona -mtune=haswell -ftree-vectorize -fPIC -fstack-protector-strong -fno-plt -O2 -ffunction-sections -pipe -isystem /opt/conda/include -fdebug-prefix-map=/home/conda/feedstock_root/build_artifacts/r-base-split_1773745610701/work=/usr/local/src/conda/r-base-4.5.3 -fdebug-prefix-map=/opt/conda=/usr/local/src/conda-prefix -c stri_search_class_trim.cpp -o stri_search_class_trim.o

x86_64-conda-linux-gnu-c++ -std=gnu++17 -I"/opt/conda/lib/R/include" -DNDEBUG -I. -UDEBUG -DNDEBUG -DU_HAVE_ELF_H -Iicu74 -Iicu74/unicode -Iicu74/common -Iicu74/i18n -DU_STATIC_IMPLEMENTATION -DU_COMMON_IMPLEMENTATION -DU_I18N_IMPLEMENTATION -D_REENTRANT -DUCONFIG_USE_LOCAL -DNDEBUG -D_FORTIFY_SOURCE=2 -O2 -isystem /opt/conda/include -I/opt/conda/include -Wl,-rpath-link,/opt/conda/lib -fpic -fpic -fvisibility-inlines-hidden -fmessage-length=0 -march=nocona -mtune=haswell -ftree-vectorize -fPIC -fstack-protector-strong -fno-plt -O2 -ffunction-sections -pipe -isystem /opt/conda/include -fdebug-prefix-map=/home/conda/feedstock_root/build_artifacts/r-base-split_1773745610701/work=/usr/local/src/conda/r-base-4.5.3 -fdebug-prefix-map=/opt/conda=/usr/local/src/conda-prefix -c stri_search_common.cpp -o stri_search_common.o

x86_64-conda-linux-gnu-c++ -std=gnu++17 -I"/opt/conda/lib/R/include" -DNDEBUG -I. -UDEBUG -DNDEBUG -DU_HAVE_ELF_H -Iicu74 -Iicu74/unicode -Iicu74/common -Iicu74/i18n -DU_STATIC_IMPLEMENTATION -DU_COMMON_IMPLEMENTATION -DU_I18N_IMPLEMENTATION -D_REENTRANT -DUCONFIG_USE_LOCAL -DNDEBUG -D_FORTIFY_SOURCE=2 -O2 -isystem /opt/conda/include -I/opt/conda/include -Wl,-rpath-link,/opt/conda/lib -fpic -fpic -fvisibility-inlines-hidden -fmessage-length=0 -march=nocona -mtune=haswell -ftree-vectorize -fPIC -fstack-protector-strong -fno-plt -O2 -ffunction-sections -pipe -isystem /opt/conda/include -fdebug-prefix-map=/home/conda/feedstock_root/build_artifacts/r-base-split_1773745610701/work=/usr/local/src/conda/r-base-4.5.3 -fdebug-prefix-map=/opt/conda=/usr/local/src/conda-prefix -c stri_search_coll_count.cpp -o stri_search_coll_count.o

x86_64-conda-linux-gnu-c++ -std=gnu++17 -I"/opt/conda/lib/R/include" -DNDEBUG -I. -UDEBUG -DNDEBUG -DU_HAVE_ELF_H -Iicu74 -Iicu74/unicode -Iicu74/common -Iicu74/i18n -DU_STATIC_IMPLEMENTATION -DU_COMMON_IMPLEMENTATION -DU_I18N_IMPLEMENTATION -D_REENTRANT -DUCONFIG_USE_LOCAL -DNDEBUG -D_FORTIFY_SOURCE=2 -O2 -isystem /opt/conda/include -I/opt/conda/include -Wl,-rpath-link,/opt/conda/lib -fpic -fpic -fvisibility-inlines-hidden -fmessage-length=0 -march=nocona -mtune=haswell -ftree-vectorize -fPIC -fstack-protector-strong -fno-plt -O2 -ffunction-sections -pipe -isystem /opt/conda/include -fdebug-prefix-map=/home/conda/feedstock_root/build_artifacts/r-base-split_1773745610701/work=/usr/local/src/conda/r-base-4.5.3 -fdebug-prefix-map=/opt/conda=/usr/local/src/conda-prefix -c stri_search_coll_detect.cpp -o stri_search_coll_detect.o

x86_64-conda-linux-gnu-c++ -std=gnu++17 -I"/opt/conda/lib/R/include" -DNDEBUG -I. -UDEBUG -DNDEBUG -DU_HAVE_ELF_H -Iicu74 -Iicu74/unicode -Iicu74/common -Iicu74/i18n -DU_STATIC_IMPLEMENTATION -DU_COMMON_IMPLEMENTATION -DU_I18N_IMPLEMENTATION -D_REENTRANT -DUCONFIG_USE_LOCAL -DNDEBUG -D_FORTIFY_SOURCE=2 -O2 -isystem /opt/conda/include -I/opt/conda/include -Wl,-rpath-link,/opt/conda/lib -fpic -fpic -fvisibility-inlines-hidden -fmessage-length=0 -march=nocona -mtune=haswell -ftree-vectorize -fPIC -fstack-protector-strong -fno-plt -O2 -ffunction-sections -pipe -isystem /opt/conda/include -fdebug-prefix-map=/home/conda/feedstock_root/build_artifacts/r-base-split_1773745610701/work=/usr/local/src/conda/r-base-4.5.3 -fdebug-prefix-map=/opt/conda=/usr/local/src/conda-prefix -c stri_search_coll_extract.cpp -o stri_search_coll_extract.o

x86_64-conda-linux-gnu-c++ -std=gnu++17 -I"/opt/conda/lib/R/include" -DNDEBUG -I. -UDEBUG -DNDEBUG -DU_HAVE_ELF_H -Iicu74 -Iicu74/unicode -Iicu74/common -Iicu74/i18n -DU_STATIC_IMPLEMENTATION -DU_COMMON_IMPLEMENTATION -DU_I18N_IMPLEMENTATION -D_REENTRANT -DUCONFIG_USE_LOCAL -DNDEBUG -D_FORTIFY_SOURCE=2 -O2 -isystem /opt/conda/include -I/opt/conda/include -Wl,-rpath-link,/opt/conda/lib -fpic -fpic -fvisibility-inlines-hidden -fmessage-length=0 -march=nocona -mtune=haswell -ftree-vectorize -fPIC -fstack-protector-strong -fno-plt -O2 -ffunction-sections -pipe -isystem /opt/conda/include -fdebug-prefix-map=/home/conda/feedstock_root/build_artifacts/r-base-split_1773745610701/work=/usr/local/src/conda/r-base-4.5.3 -fdebug-prefix-map=/opt/conda=/usr/local/src/conda-prefix -c stri_search_coll_locate.cpp -o stri_search_coll_locate.o

x86_64-conda-linux-gnu-c++ -std=gnu++17 -I"/opt/conda/lib/R/include" -DNDEBUG -I. -UDEBUG -DNDEBUG -DU_HAVE_ELF_H -Iicu74 -Iicu74/unicode -Iicu74/common -Iicu74/i18n -DU_STATIC_IMPLEMENTATION -DU_COMMON_IMPLEMENTATION -DU_I18N_IMPLEMENTATION -D_REENTRANT -DUCONFIG_USE_LOCAL -DNDEBUG -D_FORTIFY_SOURCE=2 -O2 -isystem /opt/conda/include -I/opt/conda/include -Wl,-rpath-link,/opt/conda/lib -fpic -fpic -fvisibility-inlines-hidden -fmessage-length=0 -march=nocona -mtune=haswell -ftree-vectorize -fPIC -fstack-protector-strong -fno-plt -O2 -ffunction-sections -pipe -isystem /opt/conda/include -fdebug-prefix-map=/home/conda/feedstock_root/build_artifacts/r-base-split_1773745610701/work=/usr/local/src/conda/r-base-4.5.3 -fdebug-prefix-map=/opt/conda=/usr/local/src/conda-prefix -c stri_search_coll_replace.cpp -o stri_search_coll_replace.o

x86_64-conda-linux-gnu-c++ -std=gnu++17 -I"/opt/conda/lib/R/include" -DNDEBUG -I. -UDEBUG -DNDEBUG -DU_HAVE_ELF_H -Iicu74 -Iicu74/unicode -Iicu74/common -Iicu74/i18n -DU_STATIC_IMPLEMENTATION -DU_COMMON_IMPLEMENTATION -DU_I18N_IMPLEMENTATION -D_REENTRANT -DUCONFIG_USE_LOCAL -DNDEBUG -D_FORTIFY_SOURCE=2 -O2 -isystem /opt/conda/include -I/opt/conda/include -Wl,-rpath-link,/opt/conda/lib -fpic -fpic -fvisibility-inlines-hidden -fmessage-length=0 -march=nocona -mtune=haswell -ftree-vectorize -fPIC -fstack-protector-strong -fno-plt -O2 -ffunction-sections -pipe -isystem /opt/conda/include -fdebug-prefix-map=/home/conda/feedstock_root/build_artifacts/r-base-split_1773745610701/work=/usr/local/src/conda/r-base-4.5.3 -fdebug-prefix-map=/opt/conda=/usr/local/src/conda-prefix -c stri_search_coll_split.cpp -o stri_search_coll_split.o

x86_64-conda-linux-gnu-c++ -std=gnu++17 -I"/opt/conda/lib/R/include" -DNDEBUG -I. -UDEBUG -DNDEBUG -DU_HAVE_ELF_H -Iicu74 -Iicu74/unicode -Iicu74/common -Iicu74/i18n -DU_STATIC_IMPLEMENTATION -DU_COMMON_IMPLEMENTATION -DU_I18N_IMPLEMENTATION -D_REENTRANT -DUCONFIG_USE_LOCAL -DNDEBUG -D_FORTIFY_SOURCE=2 -O2 -isystem /opt/conda/include -I/opt/conda/include -Wl,-rpath-link,/opt/conda/lib -fpic -fpic -fvisibility-inlines-hidden -fmessage-length=0 -march=nocona -mtune=haswell -ftree-vectorize -fPIC -fstack-protector-strong -fno-plt -O2 -ffunction-sections -pipe -isystem /opt/conda/include -fdebug-prefix-map=/home/conda/feedstock_root/build_artifacts/r-base-split_1773745610701/work=/usr/local/src/conda/r-base-4.5.3 -fdebug-prefix-map=/opt/conda=/usr/local/src/conda-prefix -c stri_search_coll_startsendswith.cpp -o stri_search_coll_startsendswith.o

x86_64-conda-linux-gnu-c++ -std=gnu++17 -I"/opt/conda/lib/R/include" -DNDEBUG -I. -UDEBUG -DNDEBUG -DU_HAVE_ELF_H -Iicu74 -Iicu74/unicode -Iicu74/common -Iicu74/i18n -DU_STATIC_IMPLEMENTATION -DU_COMMON_IMPLEMENTATION -DU_I18N_IMPLEMENTATION -D_REENTRANT -DUCONFIG_USE_LOCAL -DNDEBUG -D_FORTIFY_SOURCE=2 -O2 -isystem /opt/conda/include -I/opt/conda/include -Wl,-rpath-link,/opt/conda/lib -fpic -fpic -fvisibility-inlines-hidden -fmessage-length=0 -march=nocona -mtune=haswell -ftree-vectorize -fPIC -fstack-protector-strong -fno-plt -O2 -ffunction-sections -pipe -isystem /opt/conda/include -fdebug-prefix-map=/home/conda/feedstock_root/build_artifacts/r-base-split_1773745610701/work=/usr/local/src/conda/r-base-4.5.3 -fdebug-prefix-map=/opt/conda=/usr/local/src/conda-prefix -c stri_search_coll_subset.cpp -o stri_search_coll_subset.o
